In this article, we’ll cover the introduction of distributed tracing at Betfair. First, we outline our problems with monitoring and logs, and explain why we think tracing is important. Then we detail the steps we took to enable tracing of bet placement in our platform. If you are looking for an open source implementation of a distributed tracing system, we suggest you check out Jaeger (https://www.jaegertracing.io/), which is also our choice here at Betfair. Yuri Shkuro has written a great book on the subject.

Why Add Distributed Tracing?

Let us go back to microservices. Adopting this architecture has been a perfect fit for Betfair Exchange, providing our bet matching platform with scalability and reliability. Individual components are simpler, which adds to our developers’ productivity.

On the other hand, operating the platform as a whole is harder. For us, operating the platform means, first and foremost, being able to troubleshoot bet operations.

The three sources for problem solving in modern microservices are: 1) monitoring; 2) logs; and 3) traces. Up until this point, we had only the first two.

Monitoring notifies us, among other things, when the latency of bet placement spikes. Unfortunately, this kind of aggregated metric lacks the context needed to work out why the spike happened in the first place. On top of that, the first alerts often come from components that are not the culprit.

Following logs in our distributed systems is tricky. The bet jumps not only between threads and executors, but also between distributed components - and in our case even the database. One solution is to stamp log lines with a unique identifier for the bet. Splunk collects all the logs and makes them searchable. Even with the logs, one has to keep in mind that the sequence of actions can appear wrong, because the clocks of different servers are skewed and their timestamps do not line up exactly. For troubleshooting, there is no visual picture, and one has to hold a lot of pieces in one's head.
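As an illustration of stamping log lines with a bet identifier, here is a minimal sketch using Python's standard `logging` module. It is not our production code: the names `current_bet_id`, `BetIdFilter`, `build_logger` and `place_bet`, as well as the log format, are assumptions made up for this example.

```python
import contextvars
import io
import logging

# Hypothetical name: holds the identifier of the bet currently being processed.
current_bet_id = contextvars.ContextVar("current_bet_id", default="-")


class BetIdFilter(logging.Filter):
    """Copies the current bet identifier onto every log record."""

    def filter(self, record):
        record.bet_id = current_bet_id.get()
        return True  # never drop the record, only enrich it


def build_logger(stream):
    """Builds a logger whose lines all carry a bet_id=... field."""
    logger = logging.getLogger("bet-placement")
    logger.handlers.clear()
    logger.setLevel(logging.INFO)
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.setFormatter(
        logging.Formatter("%(asctime)s bet_id=%(bet_id)s %(message)s")
    )
    handler.addFilter(BetIdFilter())
    logger.addHandler(handler)
    return logger


def place_bet(logger, bet_id):
    """Hypothetical handler: sets the identifier once, then logs as usual."""
    token = current_bet_id.set(bet_id)
    try:
        logger.info("bet received")
        logger.info("bet validated")
    finally:
        current_bet_id.reset(token)


stream = io.StringIO()  # stands in for the real log destination
log = build_logger(stream)
place_bet(log, "bet-123")
print(stream.getvalue())
```

Because the identifier lives in a context variable, the code that writes the log lines does not need to pass it around explicitly; a log search for `bet_id=bet-123` then returns every line written for that bet.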

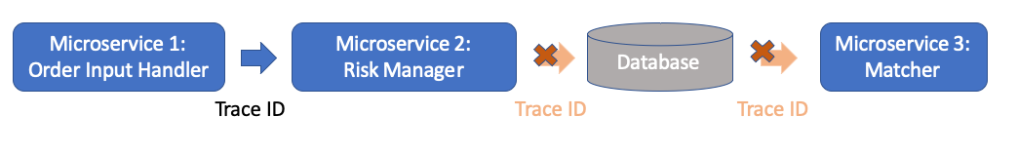

Distributed tracing records all the activities performed on a request as it passes through the platform. The unique bet identifier now becomes the trace identifier, which gets passed around together with the calling sequence, even when one service calls another over the network. Later, all the data related to the bet is aggregated by this identifier into a trace that shows us exactly how the system processed the request. Tracing adds a visual view into a single bet. We end up with a macro view of our critical components, with the ability to zoom in on a component that we suspect is causing a failure or performance issue.
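The idea of passing the trace identifier along with the calling sequence can be sketched in a few lines of plain Python. This is a toy model rather than the API of Jaeger or any other tracing library: the `Span` class, the header names `x-trace-id` and `x-parent-span-id`, and the two service functions are all hypothetical.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One unit of work within a trace."""
    trace_id: str
    span_id: str
    parent_id: Optional[str]
    operation: str
    start: float = field(default_factory=time.time)
    end: Optional[float] = None

    def finish(self):
        self.end = time.time()


COLLECTED = []  # stand-in for a tracing collector


def start_span(operation, trace_id, parent_id=None):
    span = Span(trace_id, uuid.uuid4().hex[:16], parent_id, operation)
    COLLECTED.append(span)
    return span


def inject(span):
    """Writes the trace context into (hypothetical) request headers."""
    return {"x-trace-id": span.trace_id, "x-parent-span-id": span.span_id}


def extract(headers):
    """Reads the trace context back out on the receiving side."""
    return headers["x-trace-id"], headers["x-parent-span-id"]


def matching_service(headers):
    # The callee continues the caller's trace instead of starting a new one.
    trace_id, parent_id = extract(headers)
    span = start_span("match-bet", trace_id, parent_id)
    span.finish()


def gateway(bet_id):
    # The bet identifier doubles as the trace identifier.
    span = start_span("place-bet", trace_id=bet_id)
    matching_service(inject(span))  # stands in for a network call
    span.finish()


gateway("bet-123")
trace = [s for s in COLLECTED if s.trace_id == "bet-123"]
for s in trace:
    print(s.operation, "parent:", s.parent_id)
```

Grouping the collected spans by `trace_id` gives the full trace for one bet, and the `parent_id` links are what let a UI draw the calling sequence as a tree.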

Say Hello!

Our internal microservices framework provided a tracing client out of the box. This would have been perfect for tracking bet requests as they pass through the critical path in our system. Unfortunately for us, it was not feasible to carry the trace identifier across our database, where stored procedures pass the bet request data around. This is depicted below.

Instead, we opted to mix the logs with tracing, to get the full picture of the bet flow.

The different services log all the relevant data about the bet. Our new aggregator component then reads those logs and stitches the data together into a trace. The bet data, now normalized into the trace format, is sent to a tracing collector, which in turn stores it in a database. The tracing framework provides UI tools for inspecting a saved trace whenever we want.
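To make the stitching step more concrete, here is a simplified sketch of what such an aggregator could look like. It is an illustration under assumptions, not our actual component: the log line format (`ts=... service=... bet_id=... event=...`), the millisecond timestamps, and the shape of the resulting span dictionaries are all invented for this example.

```python
from collections import defaultdict

# Hypothetical log lines, as they might arrive from different services.
LOG_LINES = [
    "ts=1000 service=gateway bet_id=bet-1 event=received",
    "ts=1004 service=matcher bet_id=bet-1 event=matching_started",
    "ts=1002 service=gateway bet_id=bet-2 event=received",
    "ts=1009 service=matcher bet_id=bet-1 event=matched",
    "ts=1012 service=gateway bet_id=bet-1 event=responded",
]


def parse_line(line):
    """Turns 'key=value key=value ...' into a dict with an integer timestamp."""
    fields = dict(part.split("=", 1) for part in line.split())
    fields["ts"] = int(fields["ts"])
    return fields


def build_traces(lines):
    """Groups log entries by bet, then builds one span per service."""
    by_bet = defaultdict(lambda: defaultdict(list))
    for line in lines:
        entry = parse_line(line)
        by_bet[entry["bet_id"]][entry["service"]].append(entry)

    traces = {}
    for bet_id, services in by_bet.items():
        spans = []
        for service, entries in services.items():
            entries.sort(key=lambda e: e["ts"])
            spans.append({
                "trace_id": bet_id,  # the bet identifier is the trace identifier
                "operation": service,
                "start_ms": entries[0]["ts"],
                "duration_ms": entries[-1]["ts"] - entries[0]["ts"],
                "events": [e["event"] for e in entries],
            })
        spans.sort(key=lambda s: s["start_ms"])
        traces[bet_id] = spans
    return traces


traces = build_traces(LOG_LINES)
for span in traces["bet-1"]:
    print(span)
```

In a real pipeline, the resulting spans would be converted into the collector's format and submitted to it. Note that the timestamps come from different servers, so the clock skew mentioned earlier still applies to the start times and durations computed here.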

As an interesting side note, we are not down-sampling our bet traces. This gives us the ability to go back any time to get another insight into a customer complaint about a bet.

Conclusions

In this article, we looked at adding tracing to the bet flow through our platform in Betfair. We explained why tracing is an important addition to monitoring and logs. We showed how we overcame restrictions imposed by our current system design that cannot pass tracing metadata. In the next article, we’ll discuss the benefits we get from tracing.