By Alex Cioflica, Cristian Bote and Tiberiu Krisboi

Just a few days before the World Cup started, we rolled out, one site after another, a major rework of all our Gaming sites, covering both a redesign of every product and a complete under-the-hood revamp. Rebuilding our technology stack was a massive effort across multiple teams and departments, ranging from Product, Design and Marketing to Tech, Content, SEO, and the list goes on.

In this lengthy article we’ll talk about our journey through choosing the right technology, learning from different mistakes and ultimately achieving what we set out to do.

Previously…

We’re part of the Gaming teams that work on several products (including Casino, Arcade and Games) across two brands (Paddy Power and Betfair).

Prior to the revamp, the products were spread across multiple codebases:

- There was an application that served the mobile versions of the products, built on an in-house framework based on Java Spring, Freemarker templates and jQuery;

- There was another application using a similar technology stack, that served the desktop sites;

- There was also a single page application written in Angular 1.6, obviously having a client-side rendering approach;

- Finally, another single page application, this time written in Angular 4.

In a nutshell, there were multiple tech stacks and multiple rendering modes. This can’t be good, can it? Not really. It led to several complications. One was that adding new features was expensive, because each feature had to be implemented again in several codebases. Another was SEO, which wasn’t in the best possible shape: ranking penalties were applied because the mobile and desktop platforms were separate sites with different structures but similar content.

For the client-side rendered sites (the ones built on Angular), SEO is a pretty tricky issue to tackle, as search engines either can’t interpret, or are limited in executing, the JavaScript code that a browser would normally run. For example, “the rendering of JavaScript-powered websites in Google search is deferred until Googlebot has resources available to process that content” - Google I/O 2018 (video). There is a workaround accepted by search engines, which most client-side apps use:

- First, periodically prerender all of the pages,

- Then cache the generated responses,

- And whenever a search bot tries to crawl a page, serve the pre-generated, cached content.
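
The three steps above can be sketched roughly as follows. This is a minimal illustration, not our production code: the bot pattern, the function names and the in-memory `Map` standing in for the real cache are all assumptions made for the example.

```javascript
// Hypothetical list of crawler markers; a real deployment would maintain a longer one.
const BOT_PATTERN = /googlebot|bingbot|yandex|baiduspider|duckduckbot/i;

// In-memory stand-in for the cache of prerendered pages (path -> HTML).
const prerenderCache = new Map();

// Steps 1 and 2: called periodically by a prerender job to store the generated HTML.
function storePrerendered(path, html) {
  prerenderCache.set(path, html);
}

// Step 3: decide what to serve for an incoming request.
function selectResponse(path, userAgent) {
  const isBot = BOT_PATTERN.test(userAgent || "");
  if (isBot && prerenderCache.has(path)) {
    return { source: "prerendered", body: prerenderCache.get(path) };
  }
  // Regular visitors (and cache misses) get the client-side app shell.
  return {
    source: "app-shell",
    body: '<div id="app"></div><script src="/app.js"></script>',
  };
}
```

Even in this small form, the extra moving parts are visible: a prerender job, a cache to keep fresh, and a user-agent list to maintain.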

While ultimately it is a working solution, it remains an overhead that needs to be maintained, making it not very suitable for the long term.

Besides SEO, another complication was the amount of data and the size of the scripts on the Angular sites, which were getting out of control. We had a really tough time lowering the footprint so that the sites could be served to the end user as fast as possible.

Overall it was pretty difficult to maintain and further develop all the products. The conclusion was clear: a rebuild from the ground up of our apps was necessary.

Several main goals were set:

- ⏱ Fast and performant. More specifically, the products need to be the fastest in the industry

- 📐 Of course, the sites must be responsive / adaptive

- 🌍 The codebase needs to serve multiple brands and multiple products consistently, while still allowing each product to have its own personality

- 🔍 SEO built into its core

- 💗 Easy to scale and to extend

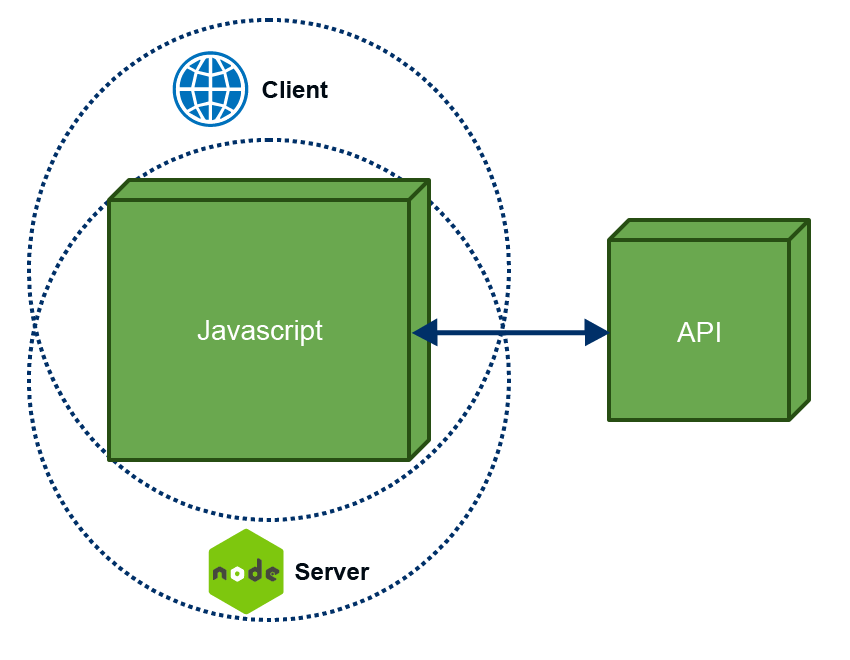

Isomorphic

We had experience working with a classical Java web application, where a feature has one implementation on the server and another in the browser, so it has to be written in two programming languages. We also had experience with a full JavaScript app, where the code was interpreted and executed entirely in the browser, making our apps heavily dependent on the performance of the customer’s device. With this in mind, and looking at the goals we wanted to achieve, early on in the revamp process we identified the need for an isomorphic application.

In an isomorphic app, the same code can be run both on the server and the client side, making it easier to maintain and faster to develop. The first request made by the browser is rendered and served by the server, while subsequent navigation on the site is processed on the client-side.

As a potential downside, you always need to be careful about where your code will run. Some functionality won’t work on the server, and some won’t work in the browser. For example, reading the width of an element should be done on the client side, since on the server you don’t have the DOM. Later on, we’ll talk about some lessons learned.
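
One common way to handle the element-width case is to guard DOM access behind an environment check. The snippet below is a minimal sketch of that idea; `canUseDOM` and `measureWidth` are hypothetical helper names chosen for this example, not part of any library.

```javascript
// True only where a DOM is available, i.e. in the browser.
function canUseDOM() {
  return typeof window !== "undefined" && typeof document !== "undefined";
}

// Hypothetical helper: returns the element width in the browser,
// or a caller-supplied fallback during server-side rendering.
function measureWidth(selector, fallback) {
  if (!canUseDOM()) {
    return fallback;
  }
  const element = document.querySelector(selector);
  return element ? element.getBoundingClientRect().width : fallback;
}
```

On the server, `measureWidth(".game-tile", 320)` simply returns the fallback, and the real measurement happens once the code runs in the browser.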

Isomorphic isn’t a new concept: it’s been around since 2011 and has gained a lot of traction in the last few years. There are several frameworks that help you achieve what you want, and there are really good resources out there that can help you ramp up an app in no time.

Study, study, study

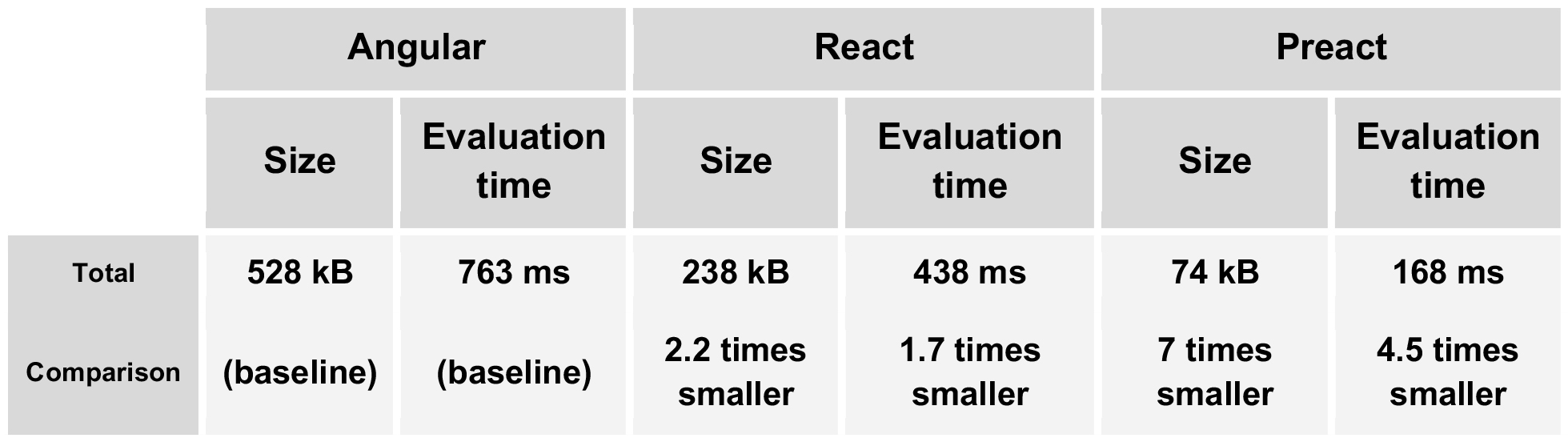

Now, which framework to use? We shortlisted a few web frameworks/libraries that could fit our purpose. With each, we developed a proof of concept, so that we could compare apples to apples and pick the right one for our use case. As a control, the target was to build and analyse the exact same page, with the following specs:

- Responsive layout for cross-platform compatibility

- Image heavy

- Containing a huge number of DOM nodes

- Backend calls to some microservices

The outcome was measured under the same conditions: each proof of concept was tested in Chrome with a mid-range device CPU and an emulated fast 3G connection.

The evaluation times and sizes were extracted from Chrome, but it was also very important to look at other performance metrics, like the number of paints and repaints, the number of nodes, etc. After all the metrics were gathered, we undertook another analysis to identify the pros and cons of each solution.

We also looked at the size of the application’s JavaScript footprint. There are several reasons to look at this:

- First of all, the browser does not process scripts in parallel

- Only one thread is used for script execution (this can be mitigated with workers, but they’re not supported by all browsers)

- Let’s not forget that scripts can be processed only after they’re loaded, so a bigger size means a longer download time

- Interpreting and processing scripts uses the client’s CPU, which can be a problem for low-resource devices like smartphones

Overall, the frameworks we tested were Angular 6, React 16.5 and Preact 8.3 (Vue.js was only briefly tested).

And there were some quite interesting results.

Angular was the largest and slowest, React almost half that, but Preact was flying!

The metrics were obviously leaning towards choosing Preact, but that wasn’t enough. Some of the other aspects we looked at:

- Server-side rendering support, and the maturity of the SSR solution

- The licensing models

- The expected learning curve (as new joiners and other teams need to have a quick ramp up)

- The documentation, in terms of completeness and readability

- How active the community is

And the winner is…

Preact was the winner for us. It’s fast, small (just 3kB in size) and compatible with React, so it benefits from the knowledge base of a big and active React community. Furthermore, it has a smooth learning curve for new joiners, more and more companies are using it, and it’s one of the fastest vDOM libraries out there.

The team behind Preact is really dedicated and active, working endlessly to improve the already great-performing library and to shrink it to an even smaller size while keeping the functionality. These days, they are preparing to release Preact X, which shaves 0.5kB off its size, which was already really low at just 3.5kB.

Fireworks 🎉

After blood, sweat, and tears, everything was launched in production, with really good overall results. We nailed all the requirements:

- A suite of speedy and performant products

- Responsive/adaptive websites

- Huge SEO and page ranking improvements

- Multiple products served from the same codebase

Customers were happy, teams were happy, marketing was happy; what more can you ask for?

Lesson learned: Number of requests

Of course, not everything was perfect. On our way to production we faced a few challenges, some of which are worth mentioning.

When running the project locally or on a development environment with low traffic, everything was fast and the performance was good. But we needed to simulate production conditions, so we ran stress tests using Gatling. The results were very disappointing.

The simulation was run against a page that aggregated data from several sources (amongst them an external content API, account microservices and others), with 10 active users making 20 requests per second. We noticed that after one minute the application was struggling to respond: the response time was initially around 1 second, but it progressively climbed to 2-3 seconds.

We identified two main reasons. One was that there was absolutely no caching of data sources that are almost static, like content. To tackle this, we developed a mechanism that can quickly check whether something has changed without fetching the whole content, and we implemented a cache using Memcached, an in-memory key-value data store. Being in-memory, it’s lightning fast. If the content changes, we notice this through the first call, then bust the cache and get a fresh batch of data.

Caching really improved our application: over a longer period of time, the response time dropped and stayed consistently at around 0.8 seconds.
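
The check-then-cache idea can be illustrated with a small sketch. To keep it self-contained, a plain `Map` stands in for Memcached, and `fetchVersion` and `fetchContent` are hypothetical callbacks representing the cheap "has anything changed?" call and the expensive full fetch. The real mechanism may well differ in its details.

```javascript
// In-memory stand-in for Memcached: key -> { version, content }.
const cache = new Map();

// fetchVersion: cheap call that returns a version marker (hypothetical).
// fetchContent: expensive call that returns the full content (hypothetical).
async function getContent(key, fetchVersion, fetchContent) {
  const latestVersion = await fetchVersion(key);
  const cached = cache.get(key);
  if (cached && cached.version === latestVersion) {
    return cached.content; // Unchanged: serve straight from the cache.
  }
  // Missing or stale: replace the entry with a fresh copy.
  const content = await fetchContent(key);
  cache.set(key, { version: latestVersion, content });
  return content;
}
```

With this shape, the expensive fetch only happens on the first request or when the version marker changes. Every other request costs one cheap check plus a cache read.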

But something still wasn’t quite right; the time should have been even lower. We dug deeper and noticed that the application was creating too many connections to the external services at the same time. This may not sound so bad, but it actually blocks the underlying system’s connections, and no new connection is established until the earlier ones are destroyed.

The root cause was the request module, which by default uses the basic settings for the request agent, and these don’t limit the number of active connections to a server. The solution was pretty obvious… use agents! For this, we used the agentkeepalive module and created an agent instance for each service. After a lot of tweaks and repeated performance testing, we were happy with the result.
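
To illustrate the "one agent per service" idea without extra dependencies, the sketch below uses Node’s built-in `http.Agent` rather than agentkeepalive, which takes similar options. The service names and the limits are made up for the example; the real values came out of load testing.

```javascript
const http = require("node:http");

// Hypothetical factory; the right limits depend on the service and on load testing.
function createServiceAgent(maxSockets) {
  return new http.Agent({
    keepAlive: true,   // Reuse sockets instead of opening a new one per request.
    maxSockets,        // Cap on concurrent sockets per host.
    maxFreeSockets: 5, // Idle sockets kept open for reuse.
  });
}

// One agent per upstream service, so each service has its own connection budget.
const agents = {
  content: createServiceAgent(20),
  account: createServiceAgent(10),
};
```

An agent is then passed with each outgoing call, for example through the `agent` option of `http.request`, which the request module also accepts.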

Overall, with these improvements in place the performance was much better: almost 30% of the response time was shaved off, and there were no spikes in the measurements over time.

To be continued…

As a summary, each use case needs its own fitting solution, and to find it you need to study and identify what is best for you. It’s different from project to project. For some projects, you might take the beaten path and choose the technology your teams are most experienced with - this will mean a faster time to market, but it might bring downsides like aging or unmaintained technology, a lack of performance updates, and so on. For other projects, you might want to use the coolest, latest, trendiest technology, but don’t jump right into it, as it might not suit your needs. To help you with this, take some time (but still timebox it) and build some proofs of concept. The time invested at the beginning of the project will be beneficial in the long run. There’s no silver bullet: you must break down and weigh all the pros and cons when choosing a technology.

Right now, our products are evolving and getting more and more complex. And while we keep adding new features, the performance and speed of our apps remain our focus.

This is the first stop in our journey. In our next article we’ll talk more about how we deal with the increasing codebase and how we ship everything to production.

References and further reading on this subject

- Google Developers - Rendering on the Web

- Airbnb - Isomorphic JavaScript: The Future of Web Apps

- A React And Preact Progressive Web App Performance Case Study: Treebo

- Nodejitsu - Scaling Isomorphic Javascript Code

- An Introduction to Isomorphic Web Application Architecture

- Isomorphic, SSR App Tutorial Made Simple: React.js, react-router, node.js with state

- Isomorphism vs Universal JavaScript

- Hackernoon - Get an isomorphic web app up and running in 5 minutes

- Google Developers - Mobile SEO Overview

- Elephate - Chrome 41: The Key to Successful Website Rendering

- Going Beyond Google: Are Search Engines Ready for JavaScript …

- PPB’s Technology Blog - Why look at emerging technology?